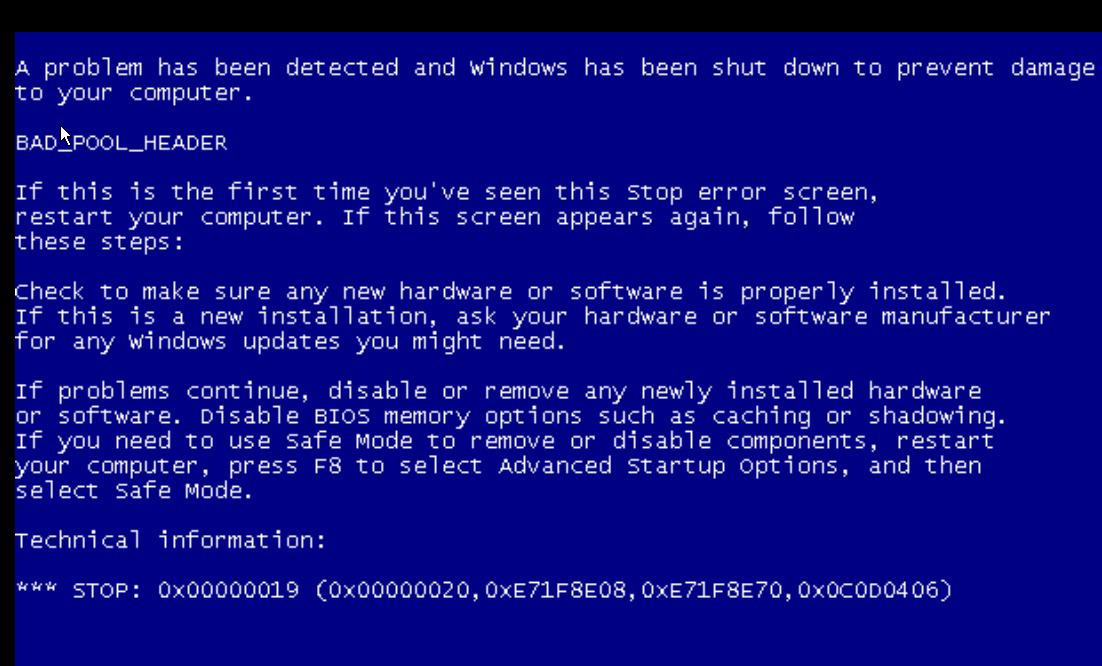

Technical Overview of Media Optimization for Microsoft Teams

Making a video call from a virtual desktop can be tricky. When the call is initiated in the virtual desktop, the user's microphone and camera send the user's voice and image to the virtual desktop. VMware Horizon® sends that data compressed, using the real-time audio-video (RTAV) feature. But the RTAV feature still sends a lot of data across the wire, and the virtual desktop has to process the data and send it out over the network to complete the call. At the same time, the virtual desktop captures the video feed and sends it back over the network to the endpoint, using the VMware Blast display protocol, so that the end user can see the video feed.

VMware, working closely with Microsoft, supports Media Optimization for Microsoft Teams with Horizon 8 (2006 and later) and Horizon 7 version 7.13. With the supported Horizon Agent and VMware Horizon® Client versions, when a user starts a call inside the virtual desktop, a channel to the local physical device is opened and the call is started there. Horizon Client draws over the Microsoft Teams window in the virtual desktop VM, giving users the impression that they are still in the VM, but the media is actually traveling directly between the local endpoint and the remote peer (as shown in Figure 1). The load disappears from the network, and the processing moves from the data center to the endpoint.

JACK

At this time there are still package dependency issues with JACK, but the only package you need is pipewire-jack-audio-connection-kit. You also need to create a file /etc/ld.so.conf.d/pipewire-jack-x86_64.

Bluetooth

The best Bluetooth codec should be chosen automatically; however, the config file has a codec selection to work around bugs in PipeWire, BlueZ, or headsets. If you're having trouble, have a look at the Bluetooth and Bluetooth Headset sections of the ArchWiki. They are written for Arch, but most of the advice translates to other Linux distributions.

BlueZ should now be enabled by default in nf. The rest of the configuration options are in nf.

Default configuration files can be found here. You may also want to check the comments in this thread for a configuration beyond the defaults.

You can change the sample rate in /etc/pipewire/nf. In later versions, alternative sample rates will be made available with automatic switching depending on client demands.

Resampling is performed in 2 places in PipeWire:

- To convert the client sample rate to the DSP sample rate of the graph. This does nothing when the client has the same sample rate as the graph.
- To convert the DSP sample rate to a supported device sample rate. This does nothing when the device supports the sample rate of the DSP graph.

PipeWire uses a custom, highly optimized, and reasonably accurate resampler. Raising the quality uses more CPU power with (arguably) little measurable advantage. The resampler quality of nodes can be changed in the nf config file. Set the resampler quality of a stream by changing the resample.quality property. It's not yet possible to configure this option for all streams; the plan is to use an environment variable to override the options.

PipeWire automatically selects the lowest requested buffer size for the graph: when several clients request different buffer sizes, the minimum is used. Limits can be configured in /etc/pipewire/nf. The options that change the PipeWire and PulseAudio application latency can be set in /etc/environment or ~/.bashrc, depending on distribution.
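The buffer size rule described above can be illustrated with a minimal sketch. It is not PipeWire code: the function names, the parameter names, and the clamping of the result to the configured limits are assumptions made for illustration, and the numbers are example values only.

```python
from typing import Iterable


def select_buffer_size(requested: Iterable[int],
                       min_size: int,
                       max_size: int,
                       default_size: int) -> int:
    """Pick one buffer size (in samples) for the whole graph.

    Hypothetical helper: takes the lowest size requested by any client,
    falls back to a default when nobody requested anything, and keeps
    the result within the configured limits (assumed behaviour).
    """
    requests = list(requested)
    chosen = min(requests) if requests else default_size
    return max(min_size, min(chosen, max_size))


def latency_ms(buffer_size: int, sample_rate: int) -> float:
    """Duration of one buffer in milliseconds at the given sample rate."""
    return 1000.0 * buffer_size / sample_rate


# Three clients ask for different buffer sizes; the lowest one wins.
size = select_buffer_size([1024, 256, 512], min_size=32,
                          max_size=8192, default_size=1024)
print(size)                               # 256
print(round(latency_ms(size, 48000), 2))  # 5.33
```

A smaller buffer lowers latency but leaves less time to process each cycle, which is why a single low-latency client can raise the CPU cost for every client in the graph.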

One of the design goals of PipeWire is to be able to closely control and configure all aspects of the processing graph. If a configuration option is not yet available, it's a bug that should be fixed.

A large part of the initial configuration of the devices is performed by the session manager. It typically loads the ALSA devices and configures the profiles, port volumes, and more. Clients that join the graph are first configured and then connected to other target nodes as defined by the policy in the session manager. Depending on the client API used, some extra configuration is possible as well.

PipeWire daemon configuration is performed with /etc/pipewire/nf. It has some basic configuration for the graph scheduling settings and what modules to load by default. The session manager has no configuration options except for enabling/disabling modules. All other variables are set in nf, nf and nf in the /etc/pipewire/media-session.d directory. Run pipewire-media-session -h for some of the options. Clients can be configured with client-specific options and, for some aspects, with environment variables.

PipeWire currently has one global sample rate used in the processing pipeline. All signals are converted to this sample rate and then converted to the sample rate of the device.
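To make the role of the single global sample rate concrete, here is a small sketch of which conversions a signal would need on its way from a client to a device. It only models the decision, not the resampling itself, and the function name and stage labels are hypothetical.

```python
from typing import List, Tuple

Stage = Tuple[str, int, int]  # (label, from_rate, to_rate)


def conversion_stages(client_rate: int,
                      graph_rate: int,
                      device_rate: int) -> List[Stage]:
    """List the resampling steps needed for one client and one device.

    A step is skipped whenever both sides already run at the same rate,
    so a fully matched setup needs no resampling at all.
    """
    stages: List[Stage] = []
    if client_rate != graph_rate:
        stages.append(("client->graph", client_rate, graph_rate))
    if graph_rate != device_rate:
        stages.append(("graph->device", graph_rate, device_rate))
    return stages


# A 44.1 kHz client on a 48 kHz graph with a 48 kHz device:
# only the client side needs converting.
print(conversion_stages(44100, 48000, 48000))
# [('client->graph', 44100, 48000)]
```

Under this model, a 44.1 kHz client playing to a 44.1 kHz device through a 48 kHz graph is resampled twice, which is one reason matching the graph rate to the most common material can be worthwhile.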